Xiaomi has officially unveiled MiMo-V2.5, a large multimodal model (LMM) intended to compete at the forefront of AI development. The new iteration is engineered from the ground up to process and understand text, images, and video within a single, integrated system. MiMo-V2.5 also offers enhanced agentic capabilities, allowing it to carry out complex tasks with significantly less manual instruction, a substantial step forward in AI autonomy and efficiency.

The release of MiMo-V2.5 places Xiaomi directly into the competitive arena of large language models (LLMs) and advanced AI systems. The company has strategically positioned this model to challenge established players by emphasizing its multimodal nature and sophisticated agentic functions. This dual focus suggests a broader ambition for Xiaomi in the AI landscape, moving beyond consumer electronics to become a significant contributor to foundational AI research and development.

Comparative Performance and Strategic Positioning

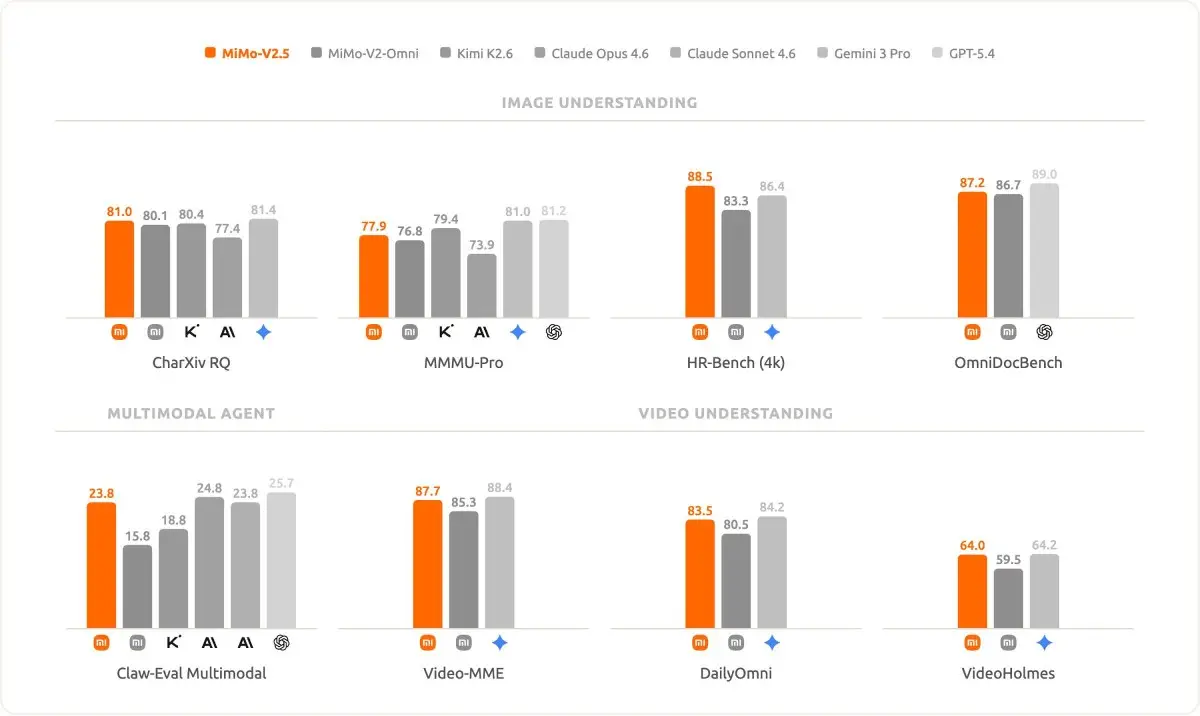

To gauge MiMo-V2.5’s standing within the rapidly evolving AI ecosystem, Xiaomi ran benchmark tests comparing the model against several leading large models, including DeepSeek-V4, Kimi K2.6, Claude Opus 4.6, and Google’s Gemini 3.1 Pro. Initial results, as presented by Xiaomi, indicate that MiMo-V2.5 is highly competitive, particularly in tasks requiring precise instruction execution. These vendor-reported results suggest that the model’s architecture and training methods are robust enough to rival and, in some specific areas, potentially surpass existing state-of-the-art systems.

A particularly noteworthy finding emerged from Xiaomi’s internal coding benchmark. The standard version of MiMo-V2.5 reportedly performed on par with the "Pro" versions of other models while requiring substantially less compute. This is a significant differentiator: it suggests that MiMo-V2.5 is not merely a product of brute-force scaling but has been optimized to perform well without an exorbitant demand for processing power. In practice, that could translate to wider accessibility and more cost-effective deployment for businesses and developers.

The implications of this efficiency are significant. In a field where computational resources often dictate whether an advanced model can be deployed at all, a model that delivers high performance with lower resource needs has a clear advantage. It could broaden access to sophisticated AI capabilities, enabling smaller organizations or those with budget constraints to use cutting-edge technology. It also aligns with the growing industry trend toward sustainable AI development, which aims to minimize the environmental impact of large-scale AI computation.

Multimodal Prowess: Beyond Text

Beyond its textual processing abilities, MiMo-V2.5 exhibits impressive proficiency in understanding and interpreting visual data, including images and videos. Xiaomi reports that the model’s performance in these multimodal tasks is on par with proprietary, closed-source models that have historically dominated this domain. This balanced approach across different data modalities is a critical aspect of modern AI, enabling more comprehensive and nuanced understanding of complex real-world scenarios.

The ability to seamlessly integrate and process information from text, images, and videos opens up a vast array of potential applications. For instance, in customer service, an AI could analyze a user’s uploaded image of a faulty product alongside a textual description of the issue to provide more accurate troubleshooting. In education, students could query an AI about specific elements within an image or video, receiving detailed explanations. In content creation, an AI could generate descriptive text for an image or even create a short video based on a textual prompt.

MiMo-V2.5 was trained on a dataset of 48 trillion tokens, a scale that lets the model capture intricate patterns and relationships across diverse forms of data. Xiaomi has released two variants of MiMo-V2.5, differentiated by their total and active parameter counts, to suit different application needs and hardware budgets.

A significant feature of MiMo-V2.5 is its extended context window, which can handle up to 1 million tokens. This allows the model to maintain context over extremely long inputs, such as lengthy documents, extended conversations, or long videos, without losing track of the overall narrative or crucial details. The capability matters for tasks that require deep comprehension across a large body of material, such as legal document analysis, complex research summarization, or continuous monitoring of intricate processes.
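
To make the 1 million token figure more concrete, the following is a minimal Python sketch of a budget check a developer might run before sending a batch of documents to a long-context model. The four-characters-per-token ratio is a rough rule of thumb for English text, not MiMo-V2.5's actual tokenizer, and the function names and the reserved output budget are illustrative assumptions.

```python
import math

# Context window reported in the article.
CONTEXT_WINDOW_TOKENS = 1_000_000


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; ASSUMPTION: about 4 characters per token.

    A real tokenizer will give different counts, especially for
    non-English text or code.
    """
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(documents, reserved_for_output: int = 8_000,
                    window: int = CONTEXT_WINDOW_TOKENS):
    """Return (fits, estimated_input_tokens, input_budget).

    reserved_for_output is a hypothetical allowance for the model's reply.
    """
    used = sum(estimate_tokens(doc) for doc in documents)
    budget = window - reserved_for_output
    return used <= budget, used, budget


if __name__ == "__main__":
    contract = "x" * 2_000_000   # stand-in for a very long document
    fits, used, budget = fits_in_context([contract])
    print(f"fits={fits} estimated_tokens={used} budget={budget}")
```

An estimate like this is only a first filter; the provider's own token count remains the authoritative number.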

The Challenge of Hardware Requirements

While MiMo-V2.5 offers a compelling suite of capabilities and an open-weight approach, its deployment presents significant hardware challenges. Xiaomi acknowledges that running the model locally requires substantial computational power, stating that even high-end GPUs like the RTX 5090 may not be sufficient for optimal performance. This suggests that for many users and organizations, direct local deployment might be impractical in the immediate term.
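
A back-of-the-envelope calculation shows why a single consumer GPU can fall short. The sketch below estimates the memory needed just to hold a model's weights at different numeric precisions. The parameter count is a hypothetical placeholder, since the article does not state MiMo-V2.5's size, and the 20% overhead for activations and cache is likewise an assumption. The 32 GB figure is the RTX 5090's memory capacity.

```python
GIB = 2**30


def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed to store the weights alone, in GiB."""
    return num_params * bytes_per_param / GIB


def fits_in_vram(num_params: float, bytes_per_param: float,
                 vram_gib: float, overhead_fraction: float = 0.2) -> bool:
    """Check whether weights plus an ASSUMED runtime overhead fit in VRAM."""
    needed = weight_memory_gib(num_params, bytes_per_param) * (1 + overhead_fraction)
    return needed <= vram_gib


# HYPOTHETICAL size for illustration only; not MiMo-V2.5's real parameter count.
HYPOTHETICAL_PARAMS = 100e9
RTX_5090_VRAM_GIB = 32  # 32 GB card, treated as GiB here for simplicity

if __name__ == "__main__":
    for label, bytes_per_param in [("16-bit", 2.0), ("8-bit", 1.0), ("4-bit", 0.5)]:
        size = weight_memory_gib(HYPOTHETICAL_PARAMS, bytes_per_param)
        ok = fits_in_vram(HYPOTHETICAL_PARAMS, bytes_per_param, RTX_5090_VRAM_GIB)
        print(f"{label}: {size:.1f} GiB of weights, fits on one card: {ok}")
```

Under these assumptions, even aggressive 4-bit quantization of a model in this size class would exceed a single 32 GB card, which is consistent with Xiaomi's caution about local deployment.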

The more accessible pathways for engaging with MiMo-V2.5 currently lie through Xiaomi’s API services or its AI Studio platform. However, these platforms are noted to have experienced periods of instability, a common challenge for emerging AI services as they scale and refine their infrastructure. The reliance on cloud-based access, while more feasible for many, also introduces considerations regarding data privacy, latency, and ongoing service costs.
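
For developers who rely on a hosted service during periods of instability, a common mitigation is to retry failed requests with exponential backoff. The following is a minimal, generic Python sketch of that pattern. It does not use Xiaomi's actual API: `request` stands in for whatever client call a developer would make, and all names and default values are illustrative assumptions.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_backoff(
    request: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call request(), retrying with exponential backoff plus jitter.

    request is a HYPOTHETICAL zero-argument callable wrapping an API call.
    In real code, narrow retry_on to transient errors (timeouts, HTTP 5xx).
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return request()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # Delay doubles each attempt, capped at max_delay.
            delay = min(max_delay, base_delay * 2**attempt)
            # Random jitter keeps many clients from retrying in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

The injectable `sleep` parameter exists so the function can be tested without real waiting. Retries smooth over brief outages but do not address the latency, privacy, or cost considerations of cloud access.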

The demand for such powerful hardware underscores the ongoing arms race in AI development, where significant investment in specialized computing infrastructure is often a prerequisite for harnessing the full potential of cutting-edge models. This also highlights a potential disparity in access, where organizations with vast financial and technical resources will be better positioned to leverage these advanced AI systems initially.

Open-Weight Approach and Future Implications

Xiaomi’s decision to release MiMo-V2.5 with an open-weight approach is a strategic move that could significantly impact the AI landscape. Open-weight models, whose trained weights are made publicly available, foster greater transparency, customization, and community-driven innovation. This contrasts with closed models, whose weights remain private and which are typically accessible only through controlled APIs.

This open approach is likely to appeal to a wide range of developers and researchers seeking greater flexibility and control over their AI implementations. It allows for fine-tuning the model for specific industry verticals, developing novel applications without vendor lock-in, and contributing to the collective advancement of AI technology. The availability of such powerful open-weight models can accelerate the pace of innovation, as a broader community can experiment, build upon, and identify potential improvements or vulnerabilities.

The success of MiMo-V2.5 in gaining widespread adoption will depend on several factors. Firstly, the stability and performance of Xiaomi’s cloud platforms will be crucial for users opting for API access. Secondly, the community’s ability to effectively leverage the open-weight model for diverse applications will be a key indicator of its practical utility. Finally, the ongoing evolution of hardware and the development of more efficient AI inference techniques could eventually make local deployment more feasible for a wider audience.

The emergence of models like MiMo-V2.5, with their advanced multimodal capabilities, agentic functions, and commitment to open-weight principles, signals a maturing AI industry. It suggests a future where AI is not only more intelligent and versatile but also more accessible and adaptable, fostering a collaborative environment for innovation and pushing the boundaries of what artificial intelligence can achieve. The long-term impact of this development will be closely watched as it navigates the competitive landscape against established proprietary AI services.